Projects

In this section you can read a few words about projects I am or have been involved in, and send me any comments you have about them (or about anything else!). You can go to the comments section or choose among the following topics:

- Wikiparable, corpora from Wikipedia

- TACARDI, Context-aware Machine Translation Augmented using Dynamic Resources from Internet

- OPENMT-2, Hybrid Machine Translation and Advanced Evaluation

- MOLTO, Multilingual On-Line Translation

- Machine Learning in Statistical Machine Translation

- COCO, the Text-Mess COrpus COmpilation

- Machine Translation for subtitles English-to-Catalan

- Descobrim l'Univers

Wikiparable, corpora from Wikipedia

Wikipedia is a very valuable source of multilingual information. Currently, the encyclopedia covers almost 300 languages, and its structure, with links among Wikipedias, makes it possible to relate the different editions of the same article. Although the percentage of overlapping articles among languages is very low (only 7% for the three largest editions: English, German and French), one can obtain comparable texts for many languages.
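As a rough illustration of what an overlap figure like this measures, here is a minimal sketch in Python. It assumes each article is identified by a language-independent concept id reachable through the interlanguage links. The ids, the toy data and the function name `overlap_share` are hypothetical, and the definition used (articles present in every edition, divided by the union of all articles) is only one possible choice, not necessarily the one behind the 7% above.

```python
def overlap_share(editions):
    """Fraction of articles present in every edition, relative to the union.

    `editions` maps a language code to the set of concept ids it covers.
    """
    article_sets = [set(ids) for ids in editions.values()]
    shared = set.intersection(*article_sets)
    union = set.union(*article_sets)
    return len(shared) / len(union) if union else 0.0


# Toy data: concept ids are invented for the example.
toy_editions = {
    "en": {"Q1", "Q2", "Q3", "Q4", "Q5"},
    "de": {"Q1", "Q2", "Q6"},
    "fr": {"Q1", "Q3", "Q7"},
}

share = overlap_share(toy_editions)
print(f"{share:.1%} of the articles exist in all three editions")
```

Even when the share common to all editions is small, any pair of editions that both cover a concept yields a candidate pair of comparable texts.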

Within this research line we work in three directions. First, we work on the acquisition of comparable corpora for specific domains; second, on the extraction of parallel corpora from comparable corpora. Finally, we are developing a web interface to facilitate the enrichment of Wikipedia: less complete editions can benefit from relevant information in other editions. To accomplish these purposes we use techniques from both information retrieval (cross-lingual when necessary) and machine translation.

TACARDI, Context-aware Machine Translation Augmented using Dynamic Resources from Internet

With the main goal of achieving qualitative improvements in translation quality from the state-of-the-art MT systems, TACARDI focuses on the following two research lines:

Exploitation of new resources provided by the Internet. (i) Off-line enrichment of resources oriented to machine translation: for instance, the usage of comparable corpora automatically gathered from the Internet, and the collection of specialized lexicons using Wikipedia and its metadata (entities, multiword terms, categories, multilingual links, etc.). (ii) On-line gathering of multilingual information to improve translation, especially on unknown words: for instance by accessing sources of multilingual information that are updated very frequently (Twitter, Wikipedia, news, etc.).

Extension of the contextual information used in translation beyond the sentence. (i) Document level translation (not sentence by sentence). Interestingly, that would lead to global document translations showing better discursive coherence. For instance, by translating in a consistent way all the terms which co-refer in a document. (ii) Exploitation of non-textual meta-information available in documents. For instance by using thematic or domain labels, information extracted from the web links, or, in the case of text from software applications, the context in which it appears (translation can vary drastically if the text appears in a paragraph, a link, a button, or a menu). This research line could improve lexical selection and domain adaptation of current translation systems.

In order to evaluate the developments from the previous research lines the project will work with texts from three different application domains: Wikipedia articles, Twitter messages and software (localization and translation of user manuals). Translation tools have been applied already to these three cases, showing significant benefits. This project aims at providing improvements able to perform even a larger positive impact on MT in the near and mid-term future.

(From the Official Project Summary) TIN2012-38523-C02-00 (01/02/2013-31/01/2016)

MOLTO, Multilingual On-Line Translation

MOLTO's goal is to develop a set of tools for translating texts between multiple languages in real time with high quality. Languages are separate modules in the tool and can be varied; prototypes covering a majority of the EU's 23 official languages will be built.

As its main technique, MOLTO uses domain-specific semantic grammars and ontology-based interlinguas. These components are implemented in GF (Grammatical Framework), which is a grammar formalism where multiple languages are related by a common abstract syntax. GF has been applied in several small-to-medium size domains, typically targeting up to ten languages but MOLTO will scale this up in terms of productivity and applicability.

A part of the scale-up is to increase the size of domains and the number of languages. A more substantial part is to make the technology accessible for domain experts without GF expertise and minimize the effort needed for building a translator. Ideally, this can be done by just extending a lexicon and writing a set of example sentences.

The most research-intensive parts of MOLTO are the two-way interoperability between ontology standards (OWL) and GF grammars, and the extension of rule-based translation by statistical methods. The OWL-GF interoperability will enable multilingual natural-language-based interaction with machine-readable knowledge. The statistical methods will add robustness to the system when desired. New methods will be developed for combining GF grammars with statistical translation, to the benefit of both.

MOLTO technology will be released as open-source libraries which can be plugged in to standard translation tools and web pages and thereby fit into standard workflows. It will be demonstrated in web-based demos and applied in three case studies: mathematical exercises in 15 languages, patent data in at least 3 languages, and museum object descriptions in 15 languages.

(From the Official Project Summary) FP7-ICT-247914 (01/03/2010-31/08/2013)

OPENMT-2, Hybrid Machine Translation and Advanced Evaluation

The aim of the project OpenMT-2 is to foster research in Machine Translation (MT) technology in order to generate robust, high-quality combined MT systems and improved evaluation metrics and methodologies. OpenMT-2 builds on the previous research carried out in the framework of the 2006-2008 OpenMT project (TIN2006-15307-C03-01).

The research in OpenMT-2 would be carried out in 5 main areas: (i) Collection, annotation and exploitation of multilingual corpora, (ii) Further development of the current single-paradigm translation systems, (iii) Pre-edition, post-edition and system improving based on collaboration with a web2.0 community, (iv) Combining and hybridizing MT paradigms, and (v) Advanced evaluation for MT.

We will test the functionality of the new developed MT technology and systems with four different languages: English, Spanish, Catalan and Basque. Also, systems will be applied in different contexts (i.e., different corpora domains and genres).

The consortium is composed by two universities: University of the Basque Country (UPV/EHU), Technical University of Catalonia (UPC), and a non-profit research centre: Elhuyar. Several companies and foundations with activities in closely related areas will serve as supervision EPOs for the project: Eleka, Fundació i2CAT, Imaxin, Semantix, Translendium SL and eu.wikipedia.

(From the Official Project Summary) TIN2009-14675-C03-01 (01/01/2010-31/12/2012)

Machine Learning in Statistical Machine Translation

Statistical machine translation (SMT) is one of the most successful paradigms in machine translation, but it still shows some limitations. Since systems translate segment by segment (or phrase by phrase), they do not make use of all the information encoded in the sentence. Machine learning techniques can alleviate this limitation by classifying the translation of each segment according to its context (i.e. the surrounding words) or the syntax of the sentence, for instance.

We are currently working on integrating both approaches. Every time the system chooses the translation of a segment, it uses the features associated with every possible translation to make the decision. These features can range from the standard probabilities in an SMT system, that is, the translation and language models, to attributes describing the grammatical category of the phrase, the part of speech, the location within the sentence, the surrounding words, and so on.
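To make the idea of choosing a translation from its features more concrete, here is a minimal sketch in Python. It assumes a simple weighted sum of feature values, with a context classifier score added next to the usual translation and language model log-probabilities. The feature names, weights, probabilities and the example phrase are all invented for illustration and do not describe our actual system.

```python
import math


def score(features, weights):
    """Weighted sum of the feature values of one candidate translation."""
    return sum(weights.get(name, 0.0) * value for name, value in features.items())


def choose_translation(candidates, weights):
    """Return the candidate with the highest weighted score."""
    return max(candidates, key=lambda c: score(c["features"], weights))


# Hypothetical weights: in a real system they would be tuned on held-out data.
weights = {"log_tm": 1.0, "log_lm": 0.5, "context_classifier": 2.0}

# Candidates for the English word "bank" in a sentence about a river.
candidates = [
    {"target": "banco",
     "features": {"log_tm": math.log(0.6), "log_lm": math.log(0.2),
                  "context_classifier": 0.1}},
    {"target": "orilla",
     "features": {"log_tm": math.log(0.3), "log_lm": math.log(0.3),
                  "context_classifier": 0.9}},
]

best = choose_translation(candidates, weights)
print(best["target"])
```

In this toy case the translation model alone prefers "banco", but the context feature tips the decision towards "orilla", which is the kind of effect the surrounding words are meant to have.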

As I have said elsewhere on this website, the machine translation research group at NLPG is made up of Jesús Giménez, Lluís Màrquez and myself. You can follow the current state of our research on the wiki: EMTwiki!

COCO, the Text-Mess COrpus COmpilation

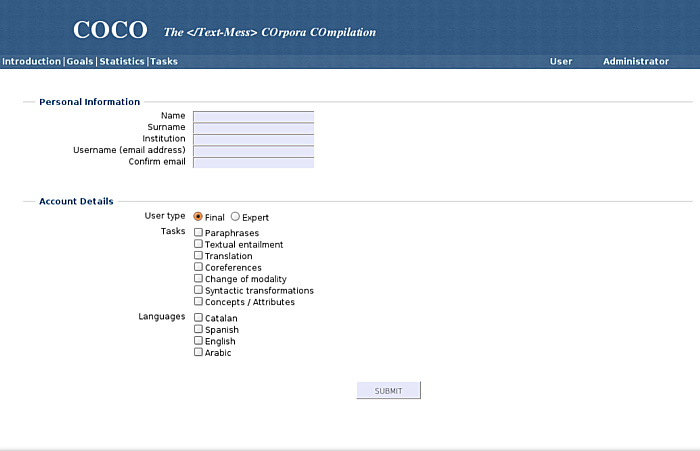

COCO is a web interface designed for acquiring knowledge from information entered by volunteers, and it is a subproject within Text-Mess. My contribution is the implementation of the interface, whose core uses MySQL and PHP. In its first phase COCO handles paraphrase corpora: volunteers can modify, insert and validate pairs. If you want to become one of these volunteers, you can visit the website and collaborate! If you only want to see what it looks like, here are some snapshots:

Soon the number of available tasks will increase, so that several corpora can be compiled, including:

- Pairs of paraphrases

- Pairs of textual entailment

- Coreferences

- Change of modality of sentences

- Syntactic transformations

- Attributes from concepts

This work is being carried out jointly by the Departament de Llenguatges i Sistemes Informàtics at UPC (LSI) and the Centre de Llenguatge i Computació at UB (CLiC).

Machine Translation for subtitles English-to-Catalan

This project has been in my head for a while. The aim is for Catalan fans of original-version films to have subtitles in their own language, or alternatively to make learning Catalan easier for non-speakers. Finding subtitles on the web is very easy for majority languages such as English or Spanish, but quite difficult for others like Catalan.

The main idea is to use a standard statistical machine translation system (Moses) to translate the subtitles into Catalan automatically. These systems are able to translate new texts using the information captured from translations they have already seen. The training process requires sentence-aligned texts in both languages. If the fragments we want to translate afterwards belong to the same domain as the aligned training data, the translation will generally be acceptable.

On the one hand, translating subtitles can be a hard task because sentences are sometimes too short. On the other hand, films or shows on the same topic share vocabulary and expressions, and this can ease the translation in some cases. A system trained on the first three seasons of Prison Break would translate the fourth season correctly but would not be able to translate House M.D. accurately, for instance; a system trained only on Tim Burton's films would not be effective when translating The Simpsons, etc. The key is then to have a fairly large and varied initial database (corpus). Once these data are available, the system can be extended and specialised for several genres in a straightforward way.

However, obtaining this initial corpus is not the only problem: one needs a one-to-one correspondence between the sentences in both languages, and this is not always the case. At present I have about a hundred subtitles in both Catalan and English. With a mean of 500 lines per film, this represents about 50,000 sentence pairs. This is quite a small corpus; even so, taking care of the alignment is a slow process, and that is why for the moment the project is still just an idea...
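As an illustration of why the alignment takes care, here is a minimal sketch in Python of one simple heuristic: pairing each English subtitle with the Catalan subtitle that overlaps it most in time. It assumes both files are timed against the same cut of the film and that each subtitle is a `(start, end, text)` tuple in seconds. The function names, the `min_ratio` threshold and the sample lines are all hypothetical, and this is not the procedure used so far, which is manual.

```python
def overlap(a, b):
    """Length in seconds of the time interval shared by two subtitles."""
    return max(0.0, min(a[1], b[1]) - max(a[0], b[0]))


def align_cues(src, tgt, min_ratio=0.5):
    """Greedily pair each source subtitle with its best-overlapping target.

    A pair is kept only if the shared time covers at least `min_ratio`
    of the shorter of the two subtitles.
    """
    pairs = []
    for s in src:
        best = max(tgt, key=lambda t: overlap(s, t), default=None)
        if best is None:
            continue
        shorter = min(s[1] - s[0], best[1] - best[0])
        if shorter > 0 and overlap(s, best) / shorter >= min_ratio:
            pairs.append((s[2], best[2]))
    return pairs


# Invented sample subtitles: (start, end, text).
english = [(1.0, 3.0, "Where are you going?"), (4.0, 6.0, "Home."),
           (10.0, 12.0, "Wait!")]
catalan = [(1.1, 3.2, "On vas?"), (4.1, 5.9, "A casa.")]

pairs = align_cues(english, catalan)
for en, ca in pairs:
    print(en, "|", ca)
```

The third English line has no Catalan counterpart and is dropped. The heuristic does not prevent two source lines from choosing the same target line, nor does it handle sentences split differently in each language, which is part of what makes manual checking slow.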

Needless to say, any help will be welcome. If you have subtitle pairs, you can send them to me by email; if you want to collaborate by aligning pairs, there are several programs that make the work easier (Gaupol for Linux or Subtitle Workshop for Windows, for example). Contact me for more information!

Descobrim l'Univers

Descobrim l'Univers (Let's Discover the Universe) is one of the popular science and education activities offered by the Centre d'Observació de l'Univers (COU) at the Parc Astronòmic del Montsec. It is designed for students between 11 and 14 years old who visit the COU and make their first approach to cosmology. It includes a file for the students and another for the teachers, both centred on what is explained in a 15-minute video. In this section you can watch this video, made in Flash by Andreu Balastegui and myself. It explains the history of the Universe from the beginning of the expansion until today. The voice-over is by the journalist Pep Gorgori. Be kind: the video was made in 2004 and it was our first experience with Flash!

If you wanna entertain yourself, you can have a look at the files as well: