Research positions on LLM training

UPC/Qualcomm collaboration

Description of the domain

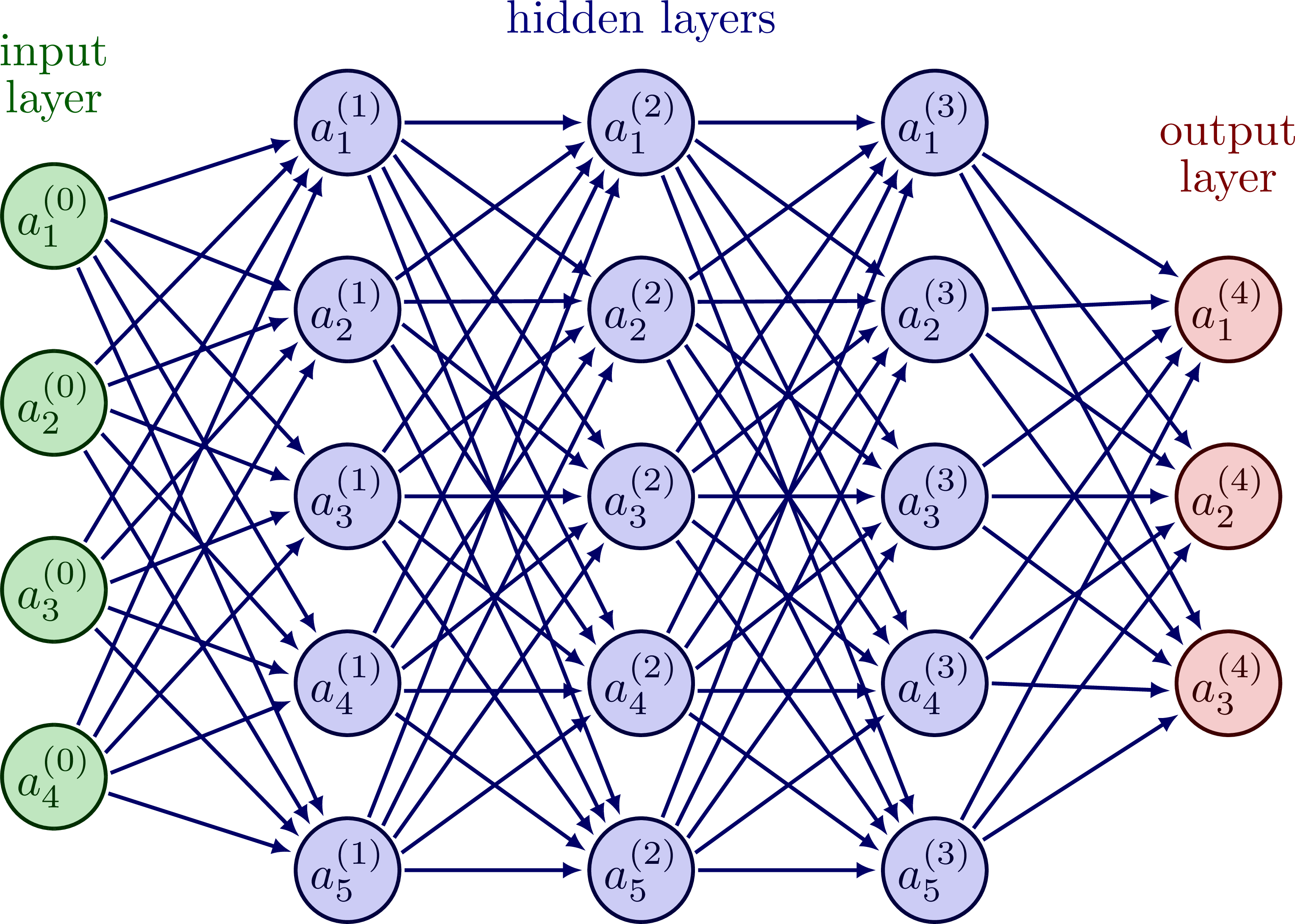

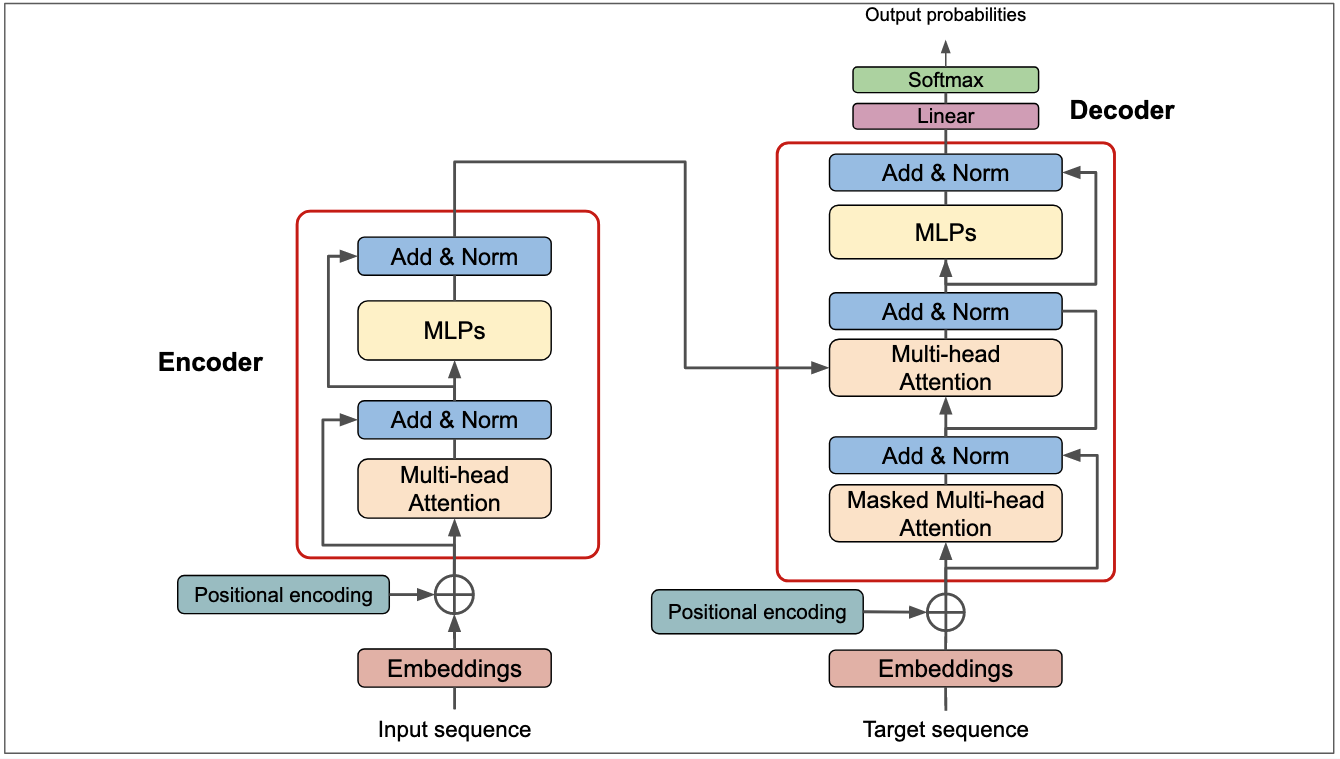

State-of-the-art models such as LLMs are too large to fit in a single compute node (GPU, NPU, CPU), both for training and inference on a device (e.g., phone, laptop, tablet) or in larger-scale data centers. There is a need to develop optimization techniques to split and place these models onto a distributed set of compute nodes so that the overall system performance is maximized. The research will be focused on optimizing the placement of AI models onto distributed systems considering training time, energy consumption, and computational resources. Explore the Qualcomm AI Hub.

Goals

Modeling and simulating distributed AI workloads using both mathematical frameworks and simulators. This encompasses the modeling of network, compute, and memory components within a distributed architecture. Developing new modules to enhance the modeling process. Evaluating and optimizing various parallelization techniques to improve overall system performance.

The Universitat Politècnica de Catalunya · BarcelonaTech offers Master thesis fellowships in the field of LLM training. The research will be supported by Qualcomm and will be carried out in an environment with a strong interaction with leading experts in the field, with opportunities for doing internships in the company.

Requirements

Students with a Bachelor degree and a strong background on Computer/Data Science and/or Mathematics are required, preferably with skills on:- Algorithms and data structures

- Mathematical optimization

- Machine and deep learning

- Oral and written English

Application

Students interested in this opportunity should contact Sergi Abadal.This research will be supported by the The Ministry of Economic Affairs and Digital Transformation and Qualcomm.